NVIDIA Launches Vera Rubin Platform: Powering the Next AI Generation with Seven Innovative Chips

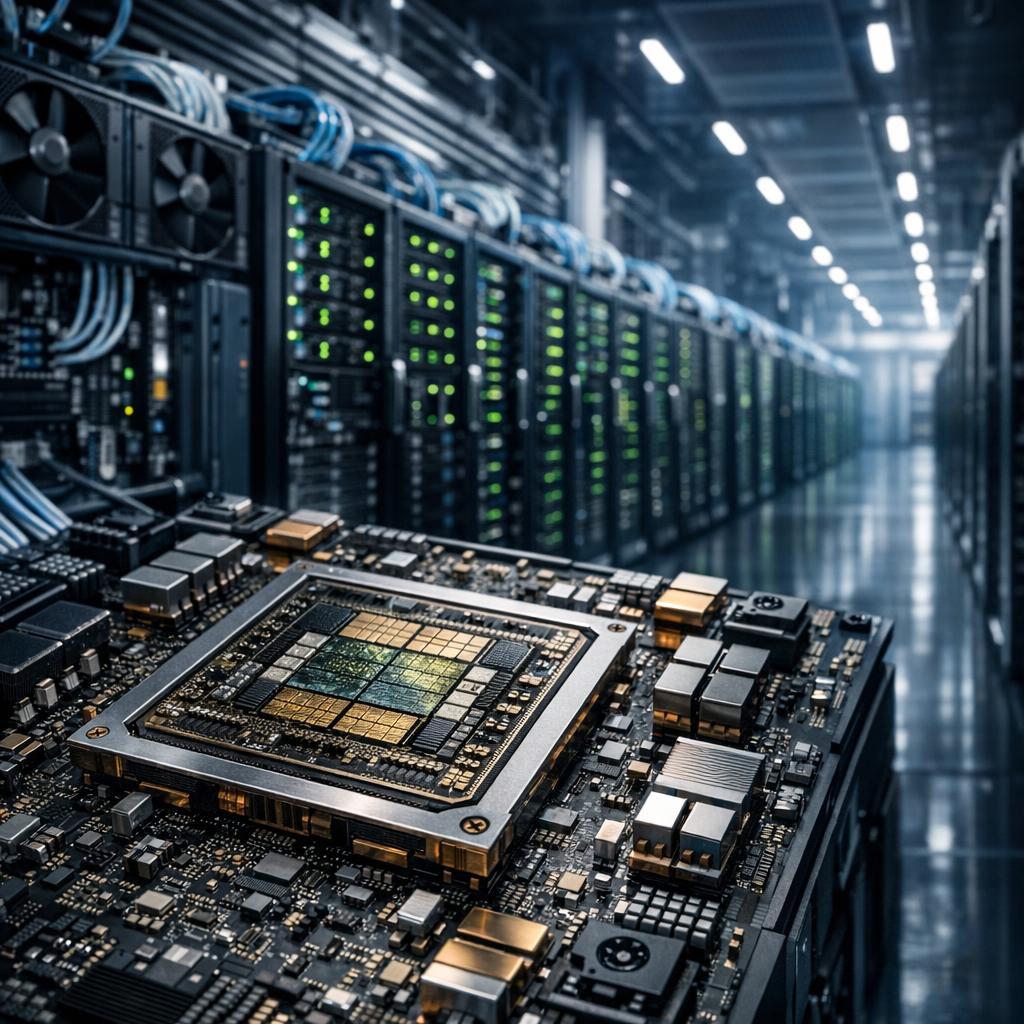

At its GTC 2026 conference, NVIDIA announced that its next-generation Vera Rubin platform is now in full production, a significant step forward for AI computing infrastructure. The platform combines seven chips designed to operate together as a single AI supercomputer. It targets workloads ranging from large-scale training to real-time agentic AI inference.

The Vera Rubin platform comprises the Vera CPU, Rubin GPU, NVLink 6 Switch, ConnectX-9 SuperNIC, BlueField-4 DPU, Spectrum-6 Ethernet Switch, and the newly integrated Groq 3 LPU inference accelerator. According to NVIDIA CEO Jensen Huang, the platform can deliver up to a 10x reduction in inference token cost and a 4x reduction in the number of GPUs required to train mixture-of-experts models, compared with the company’s previous Blackwell platform.

Several major cloud providers have already committed to the platform. AWS, Google Cloud, Microsoft, and Oracle plan to deploy Vera Rubin-based instances in the second half of 2026. Microsoft, in particular, plans to integrate the NVL72 rack-scale systems into its next-generation Fairwater AI superfactory sites. AI labs including OpenAI, Anthropic, Meta, Mistral AI, and xAI are also exploring the platform, aiming to train larger models and serve long-context, multimodal systems at lower latency and cost.

Source: https://nvidianews.nvidia.com/news/nvidia-vera-rubin-platform